HTML and SVG Generation

Features preliminary support for HTML webpage and SVG graphics code generation.

The 7B model has only 14 layers and the 14B model has 28 layers (versus 80 layers for the 40B model), which dramatically reduces inference latency to a level suitable for real-time interaction.

Successfully deployed and tested on ClaudeCode and OpenCode platforms, supporting command-line interactive programming assistance.

Preliminary HTML webpage and SVG graphics generation capabilities for rapid visual application development.

A new family of code large language models (LLMs) designed to advance autonomous software engineering and code intelligence.

Moving beyond static code representations, our models learn from repository evolution patterns, commit transitions, and dynamic code transformations to understand real-world software development processes.

Bifurcated post-training delivers two specialized variants: Thinking models (which use reasoning-driven RL for complex problem-solving) and Instruct models (optimized for general coding assistance and instruction following).

The IQuest-Coder-V1-Loop variant introduces a recurrent mechanism that optimizes the trade-off between model capacity and deployment footprint. The 7B and 14B models adopt shallow architectures for faster inference speed.
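The text above does not spell out how the Loop variant's recurrent mechanism works. As a rough illustration of the general idea behind looped (weight-shared) architectures, the toy sketch below applies the same small stack of blocks several times, so effective depth grows while the number of stored parameters stays fixed. The `Block` class, `run_stacked`, `run_looped`, and all numeric values are hypothetical stand-ins, not the actual IQuest-Coder-V1-Loop design.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class Block:
    """Toy stand-in for a transformer block: y = scale * x + bias (hypothetical)."""
    scale: float
    bias: float

    def __call__(self, x: Vector) -> Vector:
        return [self.scale * v + self.bias for v in x]

    @property
    def num_params(self) -> int:
        return 2  # scale and bias


def run_stacked(blocks: List[Block], x: Vector) -> Vector:
    """Conventional deep stack: each position in the list is applied once."""
    for block in blocks:
        x = block(x)
    return x


def run_looped(blocks: List[Block], x: Vector, num_loops: int) -> Vector:
    """Looped stack: the same blocks (same weights) are reused num_loops times."""
    for _ in range(num_loops):
        x = run_stacked(blocks, x)
    return x


shared = [Block(0.5, 1.0), Block(2.0, -1.0)]
num_loops = 3

looped_out = run_looped(shared, [1.0, 2.0], num_loops)
# Unrolling the loop gives the same computation as a 6-block stack...
unrolled_out = run_stacked(shared * num_loops, [1.0, 2.0])

# ...but only the shared blocks need to be stored.
stored_params = sum(b.num_params for b in shared)
unshared_params = stored_params * num_loops
effective_depth = len(shared) * num_loops

print(looped_out, stored_params, unshared_params, effective_depth)
```

In this toy setting the looped model computes the same function as a six-block stack while storing only two blocks' worth of parameters. Compute per forward pass is not reduced by looping, so the saving in such a scheme is in memory footprint rather than in the number of operations.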

All models natively support up to 128K tokens without requiring additional scaling techniques.

Demonstrates initial deployment capabilities on ClaudeCode and OpenCode platforms, with the ability to integrate into CLI-based agent workflows.

Achieves leading results on SWE-Bench Verified, BigCodeBench, LiveCodeBench v6, and other major coding benchmarks, surpassing competitive models across agentic software engineering, competitive programming, and complex tool use.

40B-Loop-Thinking is a research-oriented experimental prototype for exploring how structural chain-of-thought and procedural chain-of-thought can cooperate in one system.

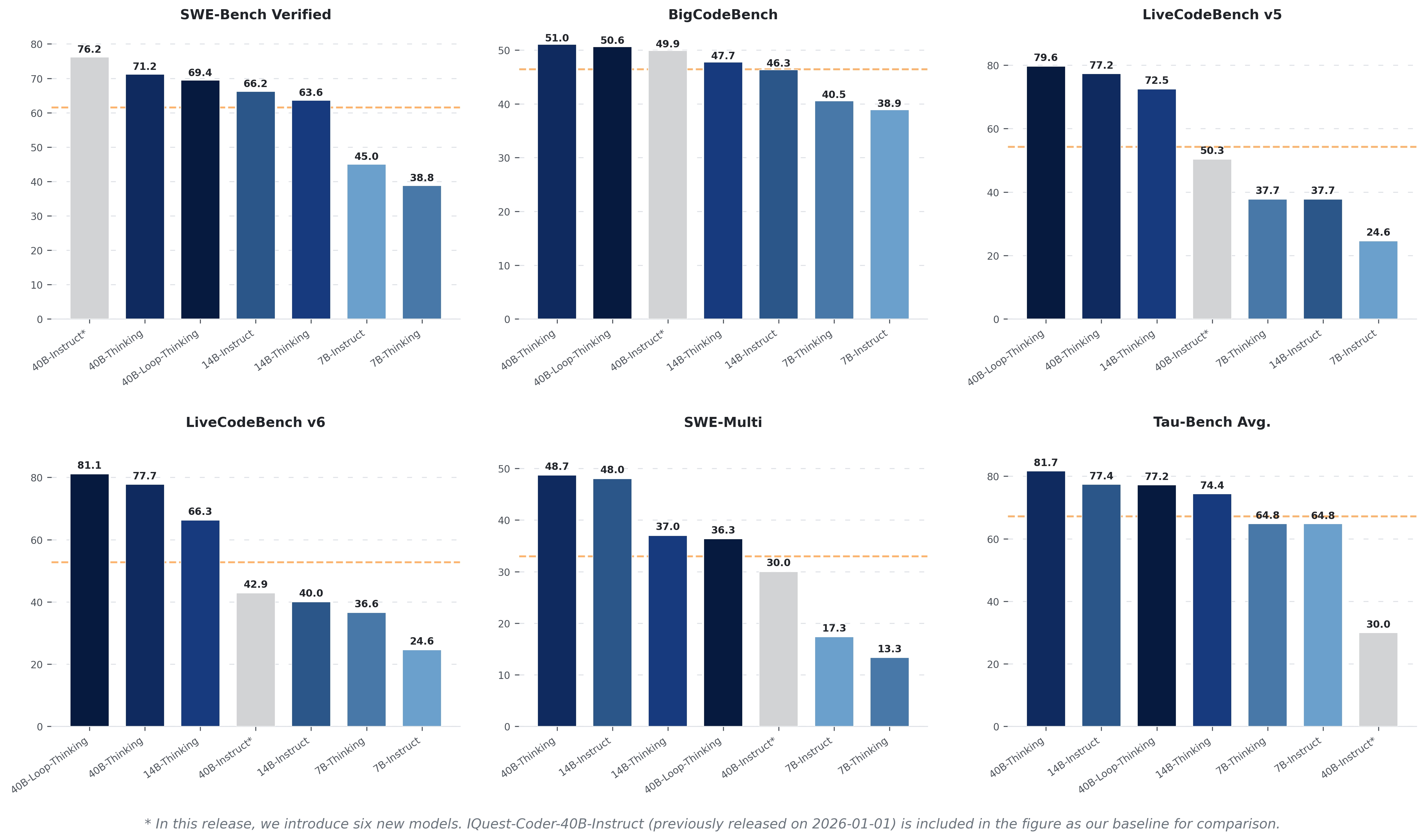

Performance snapshot across SWE-Bench Verified, BigCodeBench, LiveCodeBench, SWE-Multi, and Tau-Bench.

Complete benchmark results across all model sizes from model-performance data.

| Model | BigCodeBench (Full) | BigCodeBench (Hard) | HumanEval | HumanEval+ | MBPP | MBPP+ |
|---|---|---|---|---|---|---|
| 7B-Instruct | 38.86 | 22.97 | 79.90 | 73.20 | 73.50 | 63.50 |
| 7B-Thinking | 40.53 | 19.59 | 76.80 | 70.70 | 76.50 | 62.40 |
| 14B-Instruct | 46.32 | 26.35 | 83.50 | 78.70 | 79.60 | 68.50 |
| 14B-Thinking | 47.72 | 23.65 | 92.70 | 86.00 | 90.50 | 72.00 |
| 40B-Thinking | 51.05 | 29.05 | 93.90 | 87.80 | 91.00 | 75.10 |
| 40B-Loop-Thinking | 50.61 | 29.73 | 97.60 | 89.60 | 91.00 | 76.20 |

| Model | CruxEval (Input) | CruxEval (Output) | CodeArena (Score) | CodeArena (Win Rate %) | CodeArena (Tie Rate %) |
|---|---|---|---|---|---|
| 7B-Instruct | 45.80 | 54.20 | 0.37 | 31.28 | 10.77 |
| 7B-Thinking | 57.60 | 81.50 | 0.28 | 23.33 | 10.00 |
| 14B-Instruct | 52.60 | 57.60 | 0.65 | 60.00 | 10.77 |
| 14B-Thinking | 80.50 | 90.60 | 0.72 | 67.44 | 9.49 |
| 40B-Thinking | 87.40 | 94.00 | 0.92 | 88.97 | 5.13 |
| 40B-Loop-Thinking | 76.50 | 75.20 | 0.65 | 59.23 | 11.54 |

| Model | LiveCodeBench (v5) | LiveCodeBench (v6) | Multiple (Avg) | Python | JavaScript | Java | C++ | TypeScript |
|---|---|---|---|---|---|---|---|---|
| 7B-Instruct | 24.55 | 24.57 | 66.15 | 82.30 | 73.30 | 63.30 | 65.20 | 78.00 |
| 7B-Thinking | 37.72 | 36.57 | 53.66 | 74.40 | 64.00 | 53.80 | 48.40 | 66.00 |
| 14B-Instruct | 37.72 | 40.00 | 71.24 | 81.10 | 77.00 | 69.00 | 79.50 | 76.10 |
| 14B-Thinking | 72.46 | 66.29 | 67.09 | 86.00 | 76.40 | 66.50 | 67.70 | 76.10 |
| 40B-Thinking | 77.25 | 77.71 | 75.39 | 89.00 | 83.20 | 81.00 | 74.50 | 78.00 |
| 40B-Loop-Thinking | 79.64 | 80.00 | 80.26 | 89.00 | 88.20 | 86.70 | 84.50 | 83.60 |

| Model | Terminal-Bench (v1) | Terminal-Bench (v2) | SWE-Verified | Multi-SWE |
|---|---|---|---|---|
| 7B-Instruct | 22.50 | 11.23 | 45.00 | 17.33 |
| 7B-Thinking | 21.25 | 6.89 | 38.80 | 13.33 |
| 14B-Instruct | 36.25 | 16.85 | 66.20 | 48.00 |
| 14B-Thinking | 26.25 | 14.10 | 63.60 | 37.00 |
| 40B-Thinking | 30.00 | 22.30 | 71.20 | 48.67 |
| 40B-Loop-Thinking | 30.00 | 18.80 | 69.40 | 36.33 |
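One pattern in the agentic results is easier to see with a little arithmetic. The sketch below copies the SWE-Verified column from the table above and computes the gap between the Instruct and Thinking variants at each size where both exist. The helper name `instruct_minus_thinking` is illustrative only.

```python
# SWE-Verified scores copied from the table above.
swe_verified = {
    "7B-Instruct": 45.00,
    "7B-Thinking": 38.80,
    "14B-Instruct": 66.20,
    "14B-Thinking": 63.60,
    "40B-Thinking": 71.20,
    "40B-Loop-Thinking": 69.40,
}


def instruct_minus_thinking(scores: dict) -> dict:
    """Return {size: Instruct score - Thinking score} for sizes that have both variants."""
    gaps = {}
    for name, score in scores.items():
        size, _, variant = name.partition("-")
        thinking_name = f"{size}-Thinking"
        if variant == "Instruct" and thinking_name in scores:
            gaps[size] = round(score - scores[thinking_name], 2)
    return gaps


gaps = instruct_minus_thinking(swe_verified)
best_model = max(swe_verified, key=swe_verified.get)

print(gaps)        # positive values mean the Instruct variant scored higher
print(best_model)
```

On this benchmark the Instruct variants score higher than their Thinking counterparts at both 7B and 14B, while the highest overall score belongs to 40B-Thinking.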

| Model | BFCL (v3) | Tau-Bench-2 (Airline) | Tau-Bench-2 (Retail) | Tau-Bench-2 (Telecom) | Mercury (Beyond@1) | Mercury (Pass@1) |
|---|---|---|---|---|---|---|
| 7B-Instruct | 34.02 | 59.18 | 49.12 | 85.96 | 42.12 | 50.39 |
| 7B-Thinking | 43.34 | 52.00 | 65.49 | 76.99 | 43.24 | 53.52 |
| 14B-Instruct | 55.10 | 70.00 | 78.07 | 84.21 | 63.29 | 76.17 |
| 14B-Thinking | 53.59 | 59.18 | 76.32 | 87.72 | 61.99 | 74.22 |
| 40B-Thinking | 64.18 | 66.00 | 87.72 | 91.23 | 71.14 | 83.20 |
| 40B-Loop-Thinking | 61.57 | 64.00 | 78.07 | 89.47 | 79.61 | 94.92 |

Live demonstrations of 7B/14B models' capabilities: CLI agent integration, HTML generation, and SVG graphics.

Demos 1-4: Claude Code Integration | Demo 5: OpenClaw Computer Use

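Multi-agent system repair - Task 1: Fix the context-truncation bug → code reading, bug localization, data-structure understanding | Task 2: Parameter validation + new tool → feature implementation, security awareness, schema handling

Cyberpunk-style pixel runner game - dodge obstacles and get a high score

Generate a neural network visualization page as a single-file HTML using Three.js: layered neural network structure, signal particles flowing and propagating, cascading node activations, cyberpunk-style UI

Draw a cyberpunk radar ripple in SVG

Generate a static SVG showing the HUD of a space shooter game - starfield background, enemy lock-on box, health bar, ammo counter, radar disc

Energy core - a sci-fi style abstract geometric graphic. A glowing ring at the center, rotating circles around it, small dots on the outermost orbit, a cyan-purple gradient glow, and multi-layer rotation animations at different speeds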
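To give a sense of the kind of artifact the SVG prompts above ask for, here is a small hand-written sketch of a "radar ripple": concentric rings that expand and fade using standard SVG `<animate>` elements. This is not output from any of the models; the function name `radar_ripple_svg`, the colors, and the timing values are all assumptions chosen for illustration.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"


def radar_ripple_svg(size: int = 200, rings: int = 4, color: str = "#00ffd5") -> str:
    """Build an animated SVG of expanding, fading rings (illustrative only)."""
    center = size / 2
    max_radius = size / 2 - 10
    duration = 3  # seconds for one ring to expand fully
    parts = [
        f'<svg xmlns="{SVG_NS}" viewBox="0 0 {size} {size}" '
        f'width="{size}" height="{size}">',
        # Dark background for a neon-on-black look.
        f'<rect width="{size}" height="{size}" fill="#0a0014"/>',
    ]
    for i in range(rings):
        # Stagger the start of each ring so they ripple outward in sequence.
        delay = i * duration / rings
        parts.append(
            f'<circle cx="{center}" cy="{center}" r="0" fill="none" '
            f'stroke="{color}" stroke-width="2">'
            f'<animate attributeName="r" from="0" to="{max_radius}" '
            f'dur="{duration}s" begin="{delay}s" repeatCount="indefinite"/>'
            f'<animate attributeName="opacity" from="1" to="0" '
            f'dur="{duration}s" begin="{delay}s" repeatCount="indefinite"/>'
            "</circle>"
        )
    parts.append("</svg>")
    return "\n".join(parts)


svg_text = radar_ripple_svg()
root = ET.fromstring(svg_text)  # raises ParseError if the markup is malformed
circle_count = len(root.findall(f"{{{SVG_NS}}}circle"))
print(circle_count)
```

Saving `svg_text` to a `.svg` file and opening it in a browser should show the animation. Parsing the result with `xml.etree.ElementTree` is a cheap way to check that generated markup is at least well-formed XML.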